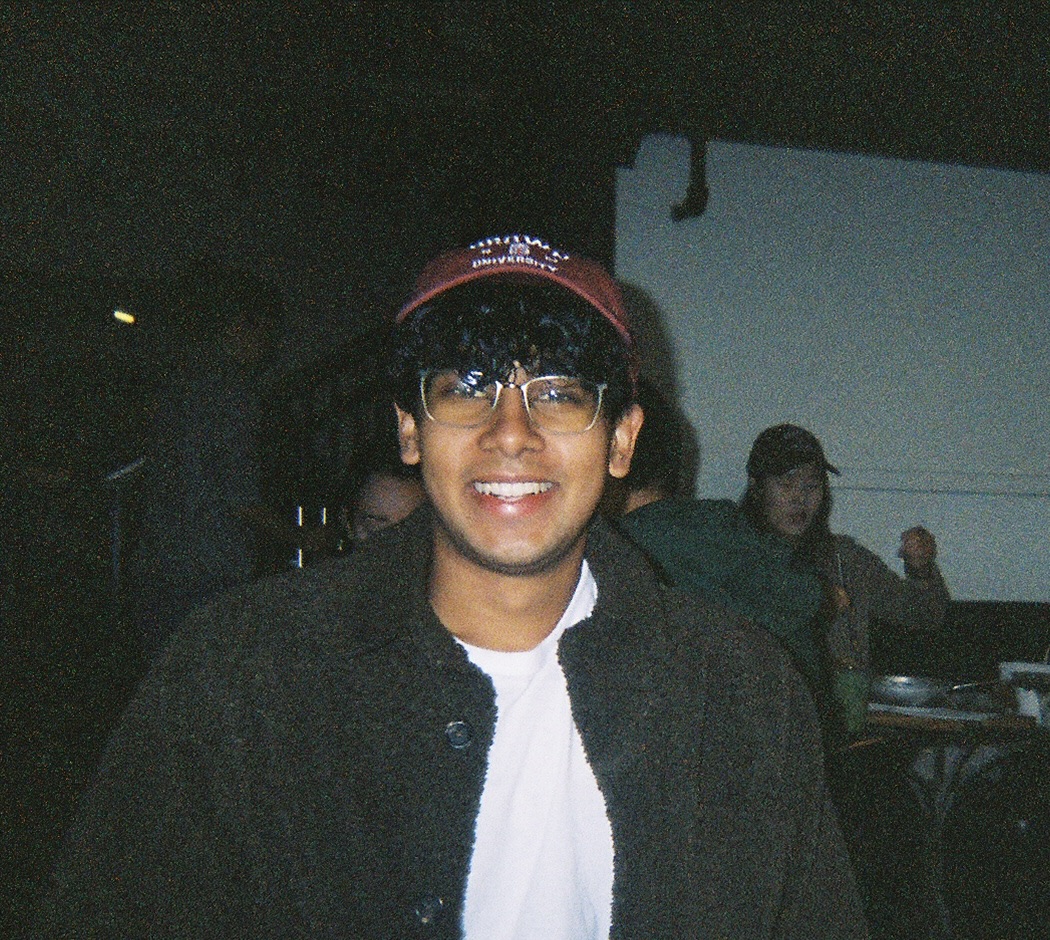

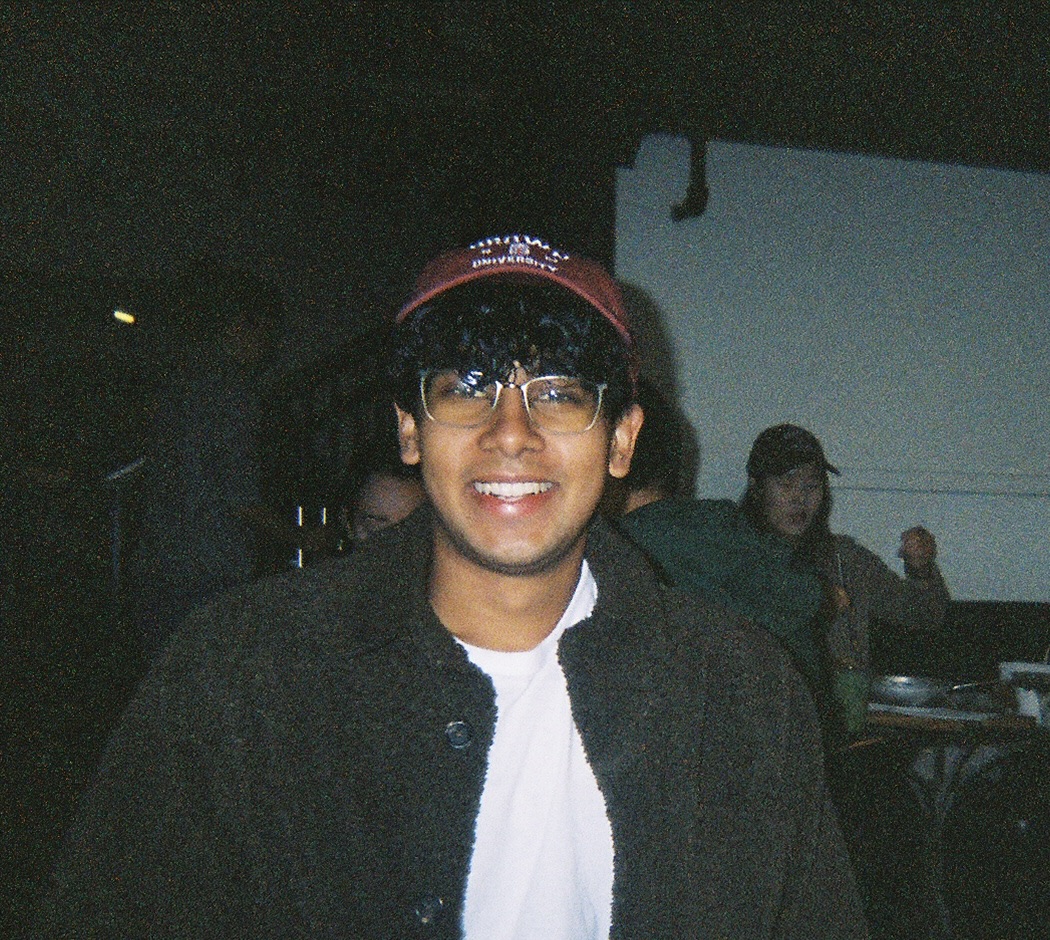

Kunal Handa

I am a Research Scientist and Member of the Technical Staff at Anthropic. I focus on improving the societal impact of AI systems.

You can contact me at: kunal [underscore] handa [at] alumni [dot] brown [dot] edu.
